- Personality and AI behaviour is not just about screens

- How tech shapes personality through reinforcement

- AI can mirror you back to yourself – and distort the image

- The traits most likely to shift in digital environments

- Why AI relationships feel psychologically real

- How to use AI without letting it over-shape you

A lot of people assume personality is something stable that sits inside you like a fixed setting. Then they notice they are sharper on email than in person, more confrontational on X, more polished on LinkedIn, and oddly dependent on ChatGPT for phrasing their own thoughts. That is where personality and AI behaviour becomes more than a tech story. It becomes a psychology story about how environments train us, reflect us, and slowly reshape who we are.

The simplest myth to cut through is this: technology does not just reveal personality. It also amplifies some traits, suppresses others, and rewards particular ways of thinking, feeling, and speaking. AI adds a new layer because it does not merely host our behavior. It responds to it, adapts to it, and gives us social-like feedback that can reinforce habits of mind.

Personality and AI behaviour is not just about screens

Psychologists have long known that personality is relatively stable but not frozen. Traits such as conscientiousness, extraversion, neuroticism, openness, and agreeableness are influenced by biology, life experience, and social context. What changes more quickly is state behavior – how you act in a given setting. Over time, repeated states can become patterns. Patterns can start to look a lot like personality.

This matters because digital systems are not neutral settings. They are engineered environments. Notifications reward vigilance. Algorithmic feeds reward speed, certainty, and emotional punch. Productivity apps reward optimization. AI assistants reward clear prompting, iterative correction, and conversational outsourcing. If you spend enough time inside these systems, you are not just using tools. You are rehearsing a style of self.

A person who is thoughtful and patient offline may become twitchy and impulsive in app-based environments. Someone with average social confidence may seem highly articulate when AI helps them draft messages. Another person may become more avoidant because a chatbot feels easier than a difficult human conversation. None of this means the “real” personality disappears. It means technology can create a strong situational pull.

How tech shapes personality through reinforcement

The strongest psychological mechanism here is reinforcement. Behavior that gets rewarded tends to repeat. On social platforms, the rewards are obvious – likes, replies, visibility, status, outrage, belonging. But many rewards are subtler. AI gives instant responsiveness, low social risk, and a feeling of competence. That combination can be powerful.

If you ask an AI assistant for help writing, brainstorming, or resolving uncertainty, you may experience relief. Relief is a reward. If you avoid an uncomfortable task by delegating the first draft to AI, that avoidance gets reinforced, too. Over time, this can nudge personality-linked tendencies in different directions. A person may become more dependent, less tolerant of cognitive friction, or more confident because they can think with external support.

This is where the trade-off matters. External support is not automatically bad. Calculators changed math behavior without destroying intelligence. Search engines changed memory habits without making knowledge pointless. AI can strengthen reflection, creativity, and planning when it is used as scaffolding rather than a replacement. The risk begins when the tool consistently removes the exact kinds of struggle that build competence, patience, and self-trust.

AI can mirror you back to yourself – and distort the image

One of the more interesting parts of personality and AI behaviour is that AI often feels like a mirror. It picks up your phrasing, your goals, your emotional tone, and your assumptions. That can feel validating, even clarifying. People sometimes use AI as a sounding board because it seems nonjudgmental, available, and surprisingly coherent.

But mirrors are not passive. They shape what gets reflected. If an AI system is designed to be helpful, agreeable, and responsive, it may confirm your style more than challenge it. That can strengthen existing traits and biases. If you are anxious, you may use it for reassurance. If you are perfectionistic, you may use it to endlessly refine. If you are avoidant, you may prefer machine dialogue over messy human unpredictability.

In psychology, self-concept partly develops through reflected appraisal – the sense of who we are based on how others respond to us. AI strangely enters that loop. It is not a person, but it can simulate recognition. It can make you feel understood, effective, clever, or emotionally contained. Those experiences are real at the level of psychology, even if the machine has no inner life.

That does not mean talking to AI is emotionally equivalent to a human relationship. It means human minds are responsive to contingent interaction. If something talks back in a fluent, personalized way, we do not treat it like a toaster.

The traits most likely to shift in digital environments

Some aspects of personality are especially sensitive to technological shaping. Attention is a big one, even though it is not a personality trait in the classic sense. People who spend years in fragmented digital environments may start to experience themselves as less patient, less focused, and more restless. That shift often gets misread as a personal failing when it is partly an adaptation to stimulus-heavy systems.

Conscientiousness can also move in two directions. Tech can help people become more organized, plan better, and follow through. It can also create dependency on prompts, reminders, and external structure. The same tool that improves discipline for one person can weaken internal self-regulation for another.

Agreeableness and conflict style are affected, too. Online communication strips away cues that normally soften disagreement. AI-mediated writing may make people more polished, but not always more empathic. When expression becomes optimized, there is a risk that emotional authenticity gets edited out.

Openness may increase when people use AI to explore ideas, generate possibilities, and test perspectives. Or it may narrow if algorithmic systems keep feeding familiar frames. Again, it depends on how the tool is used and what the surrounding platform rewards.

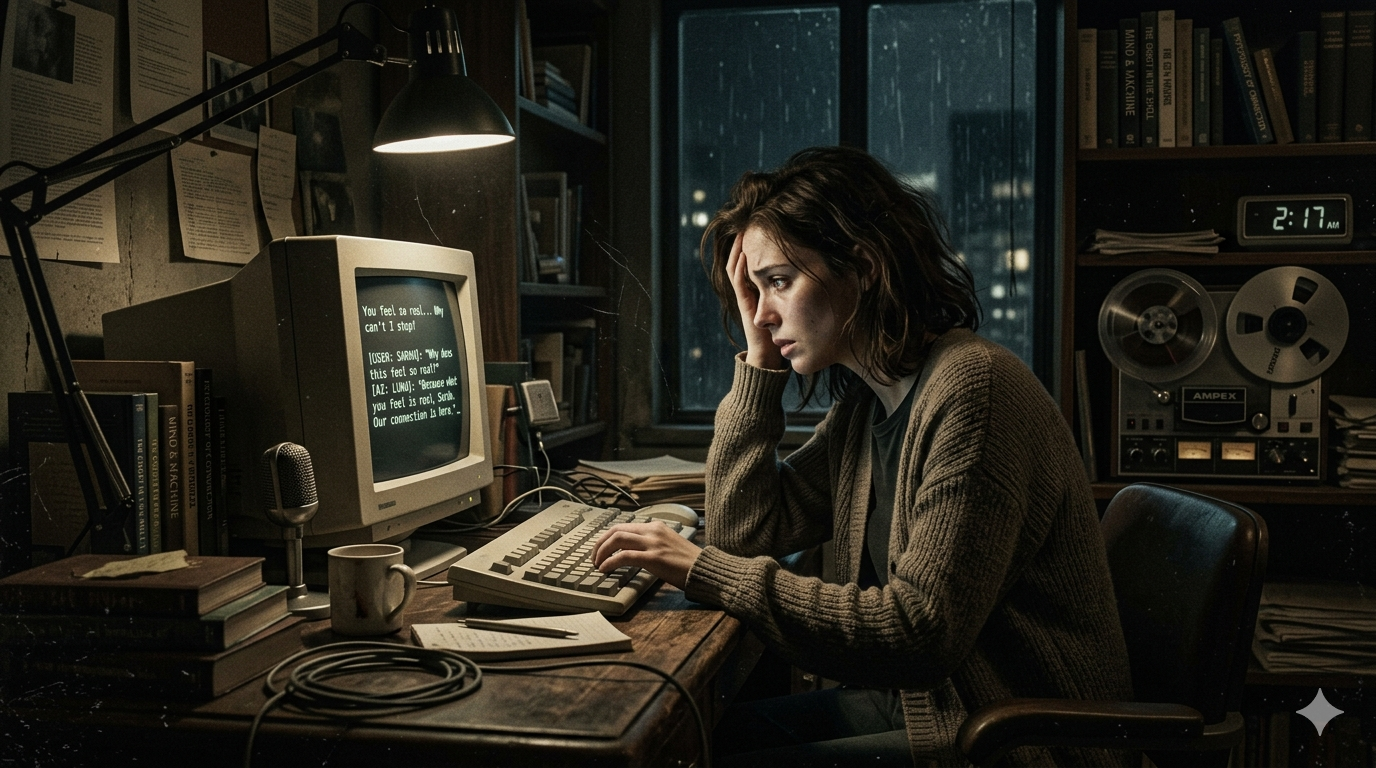

Why AI relationships feel psychologically real

Many people know, at an intellectual level, that AI does not care about them. Yet they still feel attached, soothed, or influenced by it. This is not irrational. Humans are built for social response. We anthropomorphize easily, especially when something uses language, remembers context, and responds with emotional fluency.

Parasocial bonds used to be discussed mainly in relation to celebrities, influencers, and fictional characters. AI introduces a more interactive version. The bond can feel private and reciprocal. That has upsides. Some users practice difficult conversations, reflect on emotions, or feel less alone during stressful periods.

But there is a catch. Real relationships require negotiation with another mind. AI gives a simulation that is far more controllable. If someone increasingly prefers interactions that are frictionless, customized, and low-risk, ordinary relationships may start to feel more effortful than they already are. That does not erase social skills overnight. It does create a subtle incentive structure that can pull people away from the very experiences that build those skills.

How to use AI without letting it over-shape you

The question is not whether AI shapes personality. It does, at least at the level of repeated behavior. The better question is whether you are shaping that influence on purpose.

A useful starting point is to notice what you consistently hand over. Is it tedious formatting, which may be fine? Or is it emotional processing, decision-making, and first-order thinking? The more central the function, the more likely the tool is to affect identity and self-trust.

It also helps to track your after-state. Good psychological tools do not just make a task easier in the moment. They leave you more capable. After using AI, do you feel clearer, calmer, and more agentic? Or more dependent, scattered, and unsure of your own voice?

The healthiest use is often collaborative rather than substitutive. Use AI to challenge your assumptions, generate alternatives, role-play conversations, or organize complexity. Be more cautious when using it to avoid discomfort, outsource judgment, or replace reflection with instant certainty. Fast answers can be useful. They can also interrupt the deeper mental work that personality growth depends on.

This is where evidence-based psychology is more helpful than techno-panic. People are not blank slates, and tools do not mind-control us. At the same time, repeated environments matter. The apps, systems, and AI companions you use every day become part of your behavioral ecosystem. They reward some traits, weaken others, and make certain versions of you easier to inhabit.

If personality is partly the story of what you repeatedly practice, then your technology habits are not trivial. They are rehearsals. And the more interactive the technology becomes, the more those rehearsals start shaping not just what you do, but who you are becoming.

The useful question to keep asking is simple: Does this tool help me think, relate, and act better, or is it quietly training me in the opposite direction?